There is a question BSA examiners ask that tells them more about your institution than most of what is in your exam prep binder: “Walk me through your decision not to file on this customer.”

The question targets a specific decision, made by a specific analyst, on a specific account. The examiner wants a clear, defensible explanation of why that call was made.

Most institutions can answer this question if the analyst who made the decision is still on staff and remembers the account. Very few can answer it the same way across their whole team. Fewer still can answer it six months later.

Examiners go further than reviewing outcomes. They want to know whether the reasoning behind your BSA decisions is grounded in written policy, applied consistently across your team, and available when they ask. Those are three separate tests. Passing one does not guarantee passing the others.

That does not mean every no-file decision needs a legal brief. Recent interagency SAR guidance clarified that the BSA and its implementing regulations do not require an institution to document every decision not to file a SAR. When an institution chooses to document that decision, the documentation can be concise and risk-based.

The operational question remains: when your policy, review process, audit team, or examiner asks for the reasoning, can your team retrieve it quickly and explain it consistently? The FFIEC BSA/AML Examination Manual still directs examiners to assess whether the institution has an effective SAR decision-making process, whether differences of opinion are resolved through a defined process, and whether the institution followed its own policies, procedures, and controls.

Two analysts, same case, different call

Put two experienced BSA analysts in front of the same customer profile. Same transaction patterns, same account history, same risk score. Ask them independently whether to file. You will often get different answers.

Both may be defensible. Neither is necessarily wrong. But the inconsistency becomes visible the moment an examiner lines up cases side by side.

Training alone rarely solves this. Both analysts may have completed the same BSA courses. Both may know the FinCEN guidance. The inconsistency usually comes from something harder to manage: they are working from different versions of how your institution interprets its own policy. That interpretation often lives nowhere except in the heads of the people who have been there the longest.

Here is what that looks like in practice. When one analyst was onboarded, a senior BSA officer explained that the institution handles structuring below a certain threshold differently for seasonal agricultural accounts. That guidance never made it into a policy update. It was passed along verbally, absorbed differently by different people, and applied differently ever since.

What your case management system actually captures

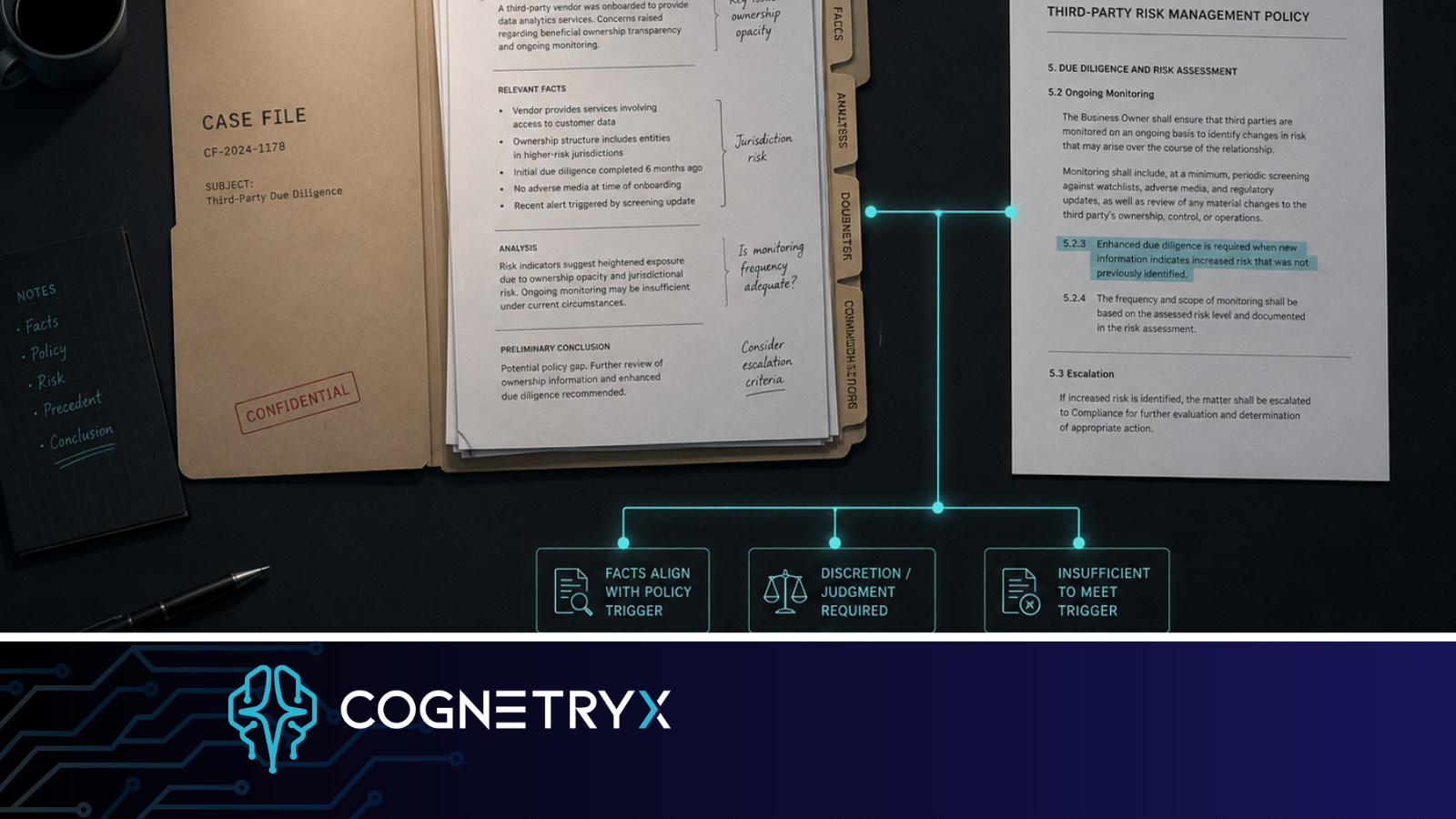

Case management platforms are good at recording what happened. They were not built to capture the full reasoning path: which policy language was applied, which prior decisions the analyst treated as comparable, and how they weighed competing signals before reaching a conclusion.

When an examiner asks about a filing decision, they have already read your case management records. What they are looking for is the reasoning behind those records. The narrative field typically describes the outcome and the activity that led to it. Decision logic, meaning why the analyst reached that conclusion and not another, is something different, and examiners know the difference.

Case management records what your team decided. A knowledge system captures how your institution thinks through a decision. Examiners are testing the second thing. When analysts make calls based on norms that exist only in someone’s memory, that gap is where inconsistency starts.

What happens when experienced people leave

BSA teams depend heavily on judgment. When a senior analyst or BSA officer leaves, years of accumulated context can leave with them: the edge cases, the informal guidance, the committee decisions, and the practical reasoning that never made it into a procedure update.

The new analyst trains on the current written policy. That policy may be accurate, but it may not be complete. The unwritten understanding that kept the previous team consistent has to be rebuilt over months of experience. During that window, decision quality can become less consistent than leadership realizes.

That is why continuity matters. The issue is not whether one person knows the answer. The issue is whether the institution can preserve the reasoning behind the answer after roles change, teams grow, or exam questions arrive long after the original case was closed.

What “traceable” actually means in BSA

When compliance officers talk about traceable decisions, they usually mean an audit log in their case management system. That matters, but it is only part of the answer. A truly traceable BSA decision means your team can show four things:

- Which version of which policy the analyst applied

- That similar profiles have been treated consistently across your team

- That the reasoning reflects your current written guidance, not how someone explained it three years ago

- That the answer to “why” is the same whether the question comes today or eighteen months from now

Documenting the outcome does not get you there by itself. What helps is making your institutional knowledge current, searchable, and grounded in your own documentation rather than whatever an external AI tool happens to know about BSA generally.

Your staff may already be using AI tools to research questions and draft language. The issue is whether those tools are pulling from your policy, your procedures, and your prior decisions, or from a generic training set. Those produce very different answers when an examiner starts asking.

What actually changes when your policy library is searchable

The tool is less important than what it knows. An analyst with a general AI tool and an analyst with one that has indexed your BSA policy, SAR narrative library, internal guidance memos, and compliance committee decisions are doing different work, even if the interface looks the same.

When an analyst asks how your policy defines structuring for business accounts with irregular cash cycles, the answer should come from your documents. A synthesis of public guidance may be useful background, but it is not the same as your institution’s approved standard. One answer supports your decision record. The other can create uncertainty.

When that system runs inside your own environment and under your own controls, the consistency problem and the confidentiality problem are addressed together. Your analysts are working from the same approved sources, so their reasoning stays more consistent. Your member and customer data stays inside your environment.

That is what examiner-ready looks like for a BSA team: decisions that trace back to the same written guidance, applied the same way, by anyone who pulls up the case.

See What This Looks Like for Your BSA Team

Cognetryx deploys inside your institution’s environment and indexes your approved BSA policies, procedures, and case history. Your team’s decisions stay grounded in your own documentation. Sensitive data stays inside your controlled environment.

Book a Free AI Strategy Assessment →